Lab 11: Interactions With Categorical Predictors

PSYC 7804 - Regression with Lab

Spring 2025

Today’s Packages and Data 🤗

emmeans package (Lenth et al., 2025) is a great package for exploring the results of many linear, generalized linear, and mixed models. it also helps compute mean contrasts, trends, and comparisons of slopes. I discover some new functionality every time I look at this package!

What are we looking at Today?

In Lab 10 we looked at categorical predictors and a bunch of coding schemes for categorical variables, but we will stick to dummy coding for this lab.

We eventually want to look at whether gender (\(Z\)) moderates how socst (\(X\)) score predicts write (\(Y\)) score. As a reminder:

-

gender: student gender (either male or female). -

socst: score on a standardized social studies assessment. -

write: score on a standardized writing assessment.

So for the dummy coded gender variable, \(0 =\) female and \(1 =\) male

Starting from The Top

First let’s start with a regression with the gender only predicting write score. We saw this in Lab 10 already.

Call:

lm(formula = write ~ gender, data = hsb2)

Residuals:

Min 1Q Median 3Q Max

-19.991 -6.371 1.879 7.009 16.879

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 54.9908 0.8797 62.509 < 2e-16 ***

gendermale -4.8699 1.3042 -3.734 0.000246 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 9.185 on 198 degrees of freedom

Multiple R-squared: 0.06579, Adjusted R-squared: 0.06107

F-statistic: 13.94 on 1 and 198 DF, p-value: 0.0002463\[\widehat{\mathrm{write}} = 54.99 - 4.86 \times \mathrm{gender} \]

We looked at the interpretation for this kind of regression in the last lab:

-

\(54.99\) is the expected mean

writescore of females (which is coded as \(0\)). -

\(-4.86\) is the expected mean difference in

writescore for males compared to females.

Thus, there is a significant difference in write score between the two groups, with males being \(-4.86\) lower on average.

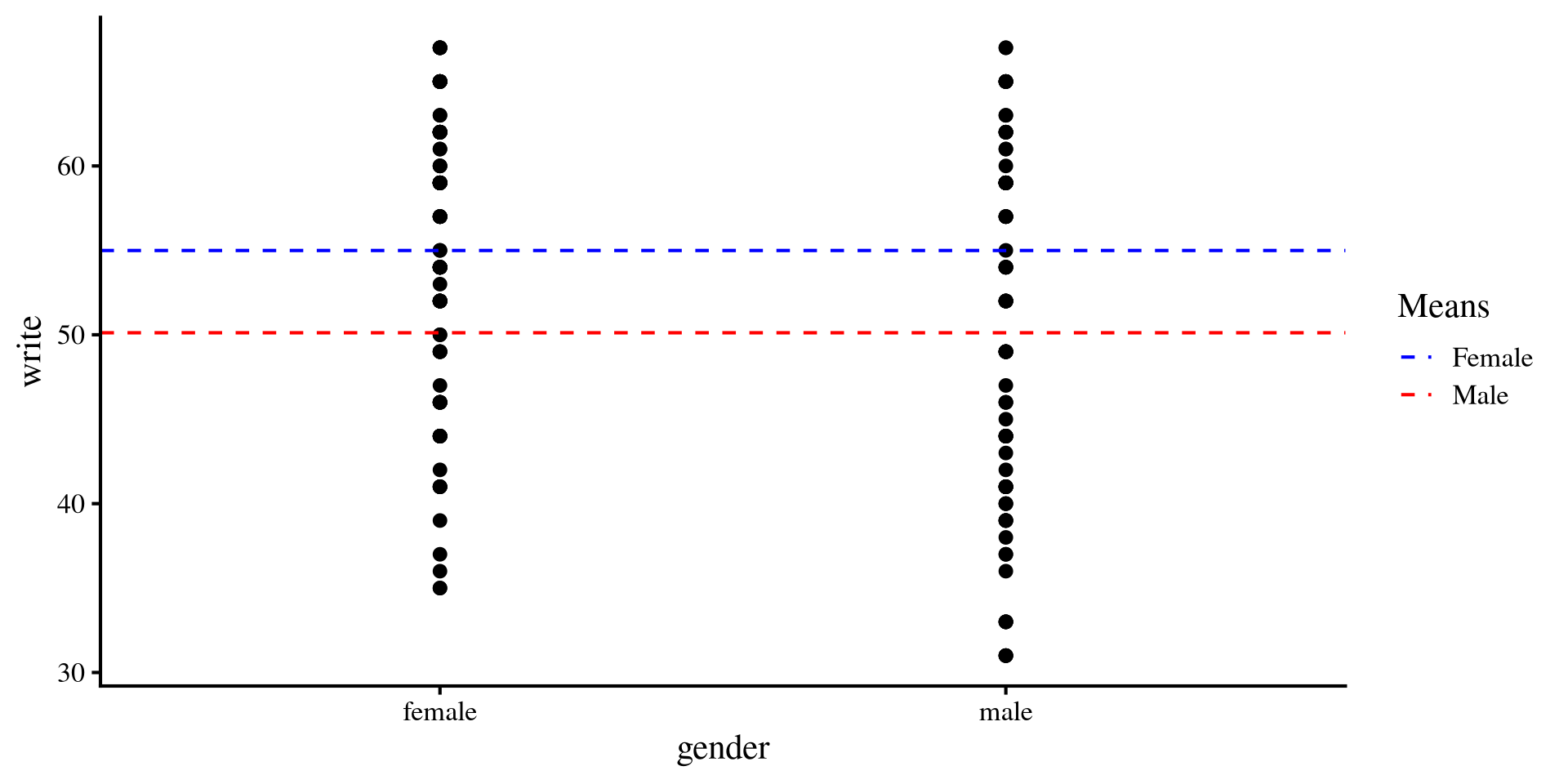

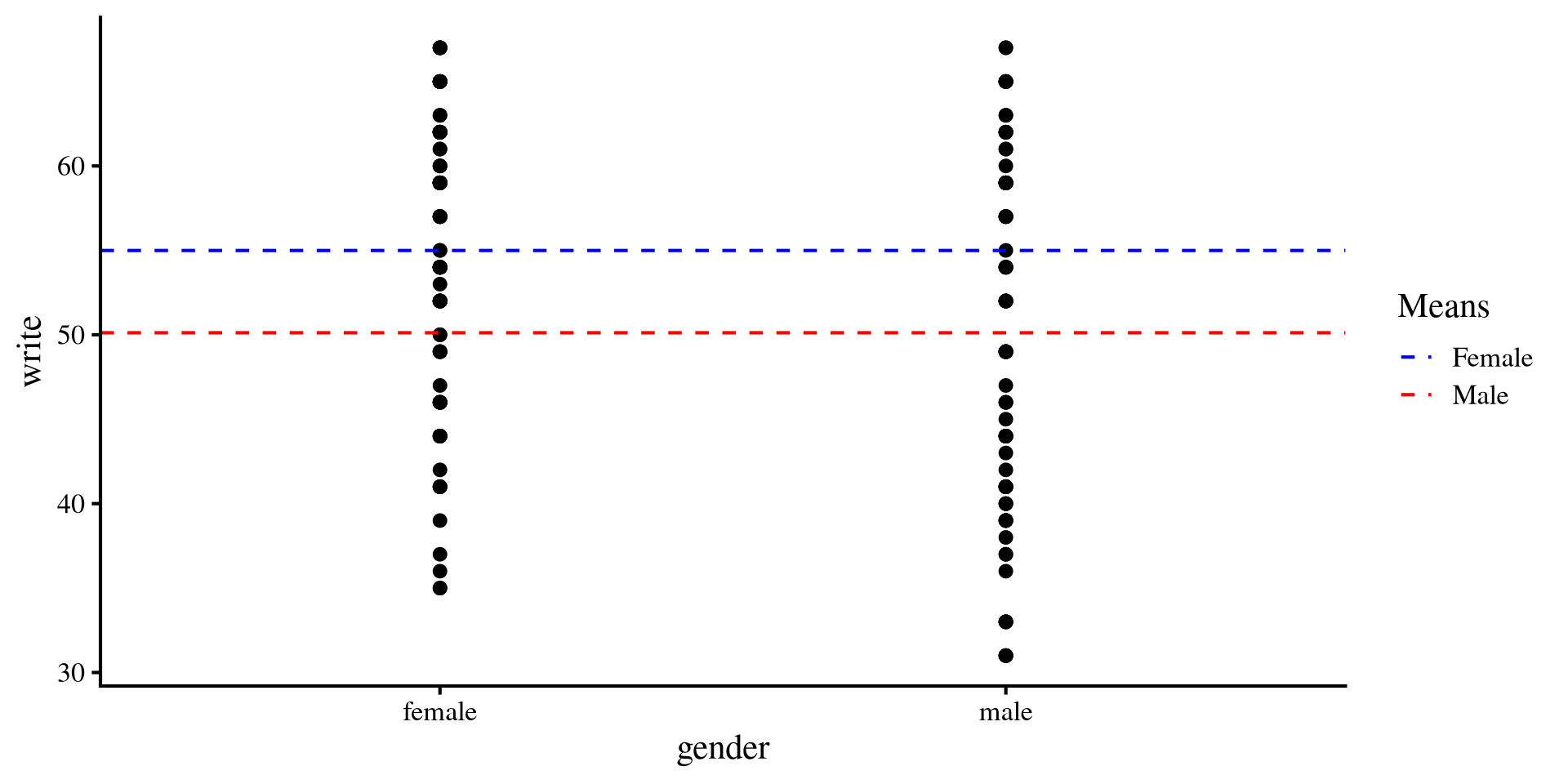

Visualizing write ~ gender

Once again, we can visualize the mean differences between the two groups.

This is nothing new, but it is interesting to see what happens to this plot once we add a continuous predictor to our regression.

Plot code

mean_female <- mean(hsb2$write[hsb2$gender == "female"])

mean_male <- mean(hsb2$write[hsb2$gender == "male"])

ggplot(hsb2, aes(x = gender, y = write)) +

geom_point() +

geom_hline(aes(yintercept = mean(mean_female), color = "Female"),

linetype = "dashed") +

geom_hline(aes(yintercept = mean(mean_male), color = "Male"),

linetype = "dashed") +

scale_color_manual(values = c("Female" = "blue", "Male" = "red")) +

labs(color = "Means")

Adding a continous Predictor

We further hypothesize that socst should be positively related to write score.

Call:

lm(formula = write ~ gender + socst_cent, data = hsb2)

Residuals:

Min 1Q Median 3Q Max

-18.7066 -4.6302 -0.2224 5.2485 18.4840

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 54.72254 0.69748 78.458 < 2e-16 ***

gendermale -4.28032 1.03479 -4.136 5.22e-05 ***

socst_cent 0.52355 0.04812 10.880 < 2e-16 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 7.277 on 197 degrees of freedom

Multiple R-squared: 0.4165, Adjusted R-squared: 0.4105

F-statistic: 70.3 on 2 and 197 DF, p-value: < 2.2e-16\[\widehat{\mathrm{write}} = 54.72 - 4.28 \times \mathrm{gender} + .52 \times \mathrm{socst}\]

Now that we have a continuous predictor, things are slightly different:

-

\(54.72\) is now the expected mean

writescore of females, whensocstis at its mean value. -

\(-4.28\) is the expected mean difference in

writescore for males compared to females. whensocstis controlled. -

\(.52\) is the expected mean increase in

writefor each unit increase insocst. Unsurprisingly,writeandsocstare positively related.

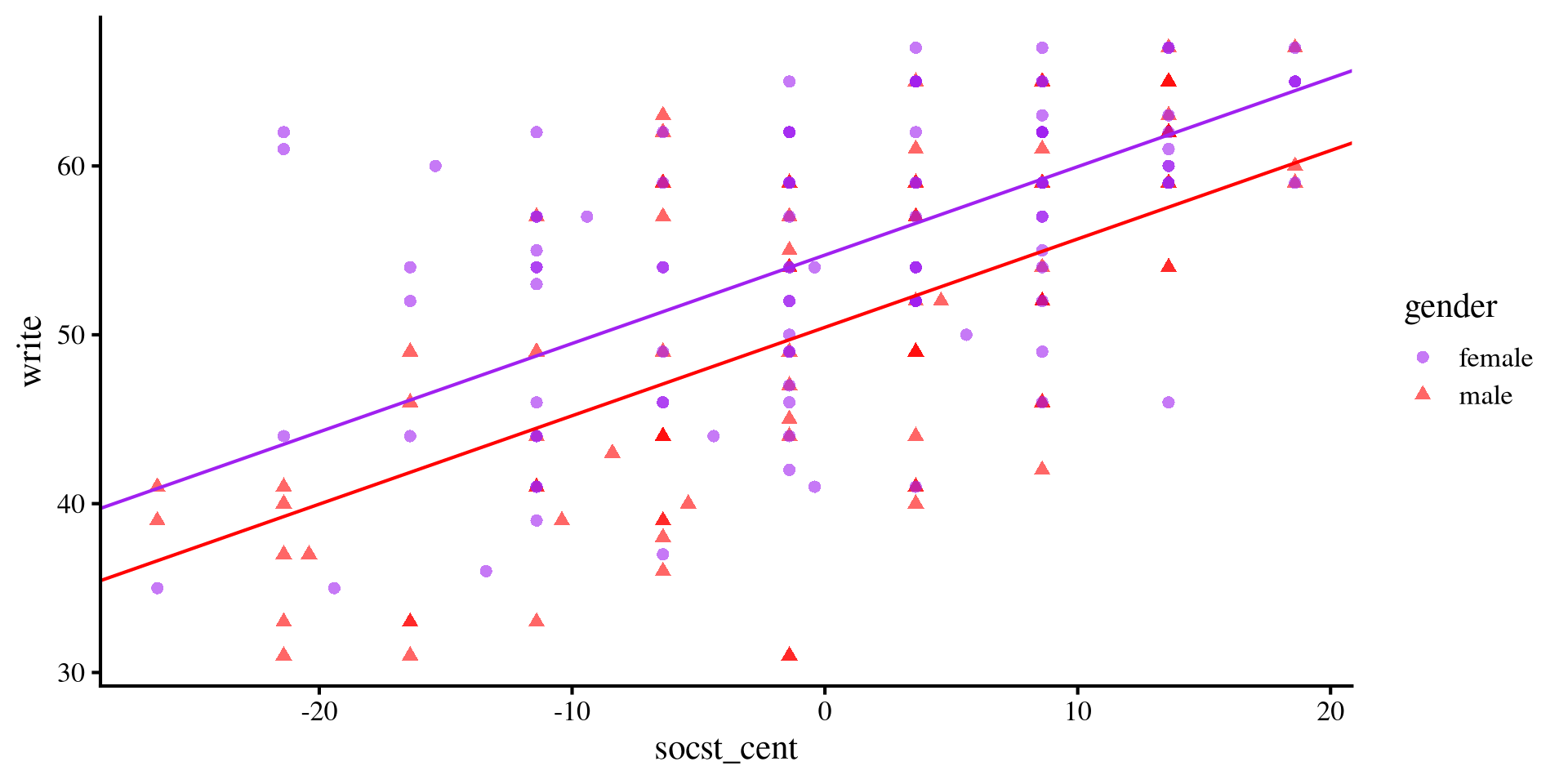

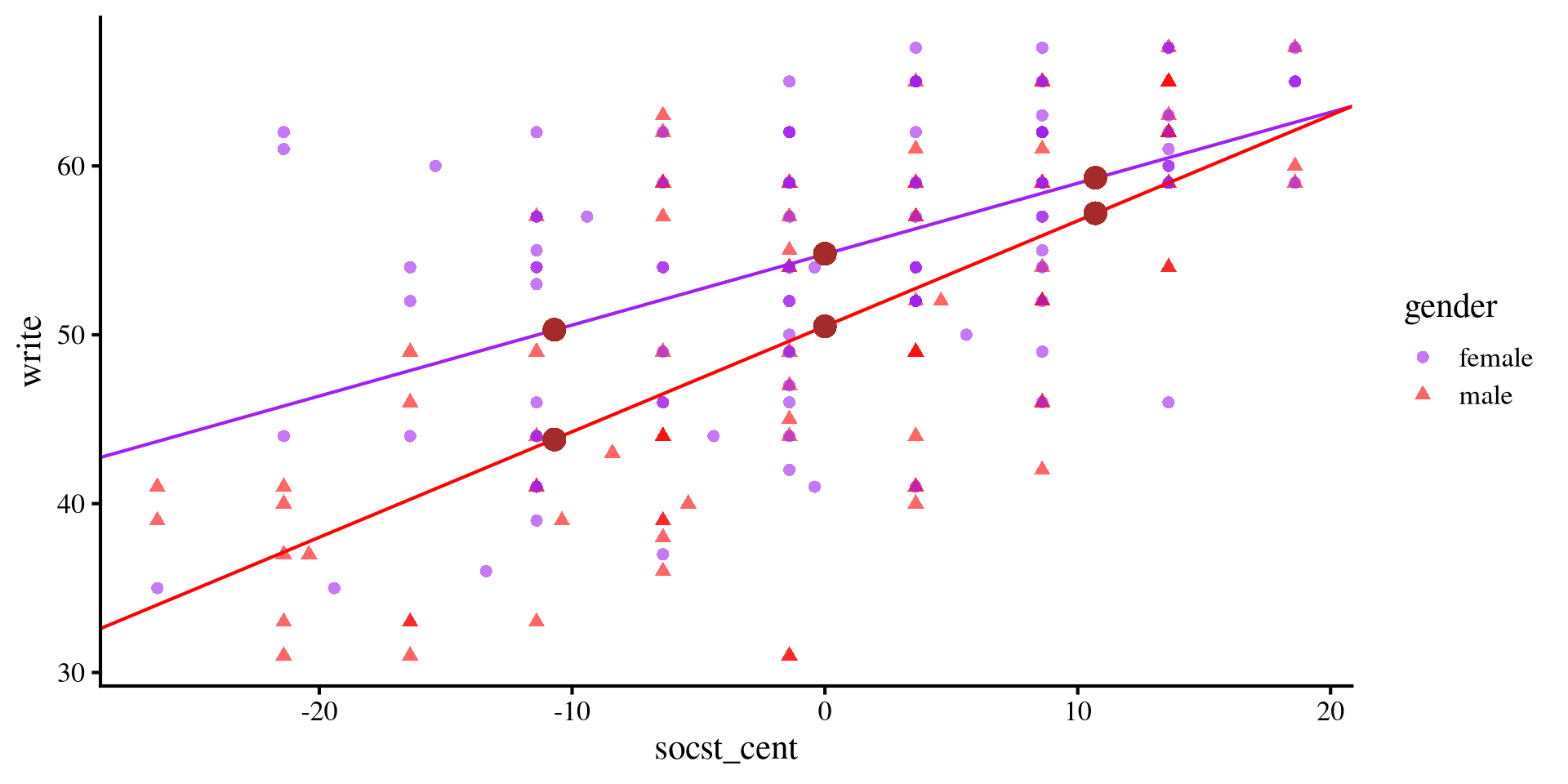

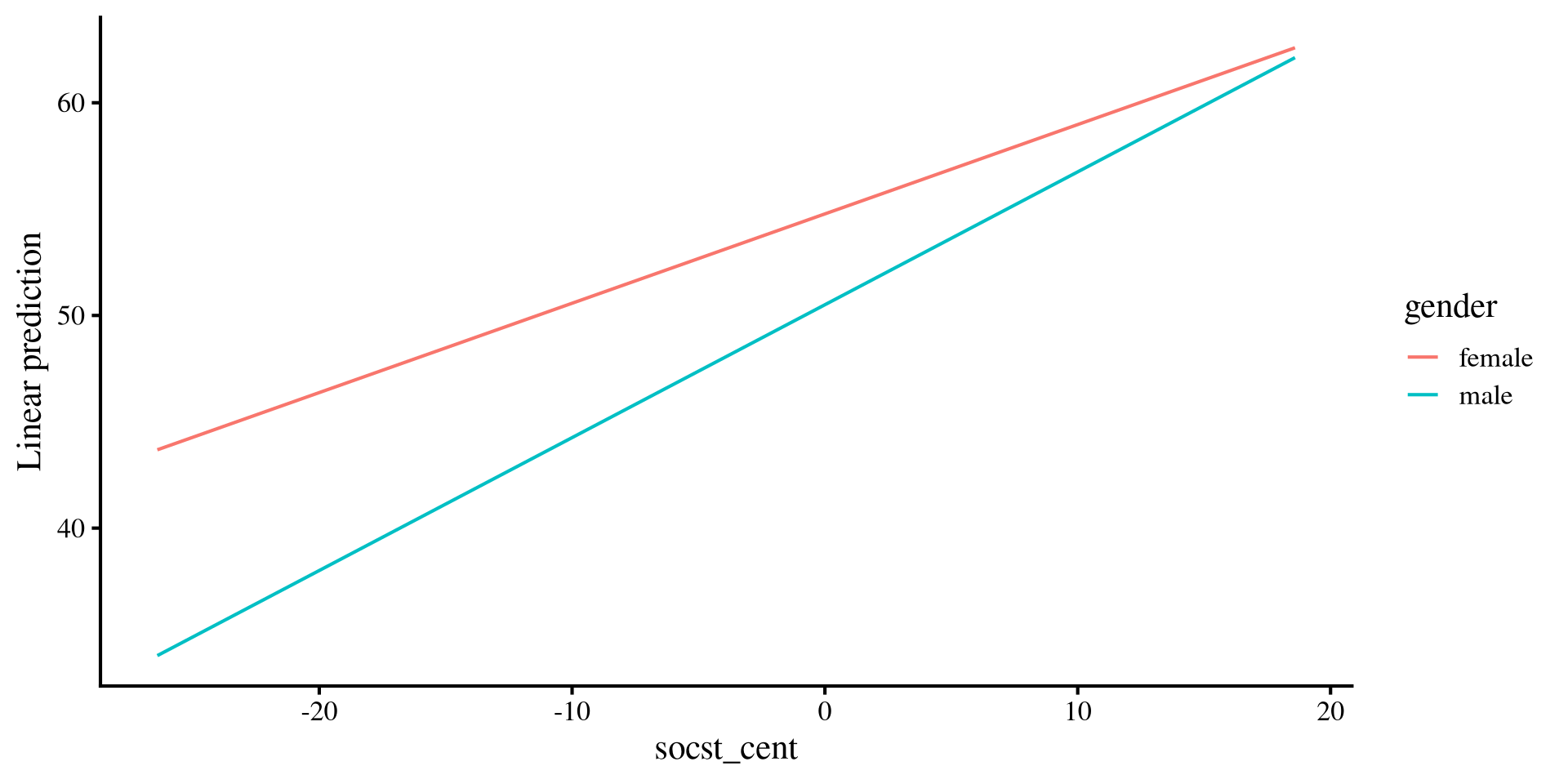

Visualizing write ~ gender + socst

socst and write should be the same for both groups.

Plot code

ggplot(hsb2, aes(x = socst_cent, y = write, colour = gender)) +

geom_point(aes(shape = gender), alpha= .6) +

geom_abline(intercept = coef(reg_both)[1], slope = coef(reg_both)[3], col = "purple") +

geom_abline(intercept = coef(reg_both)[1] + coef(reg_both)[2], slope = coef(reg_both)[3], col = "red") +

scale_color_manual(values=c( "purple", "red"))

Adding the Interaction

If we think that the relationship between socst and write should change depending on gender, we can test this hypothesis by including an interaction effect.

Call:

lm(formula = write ~ gender * socst_cent, data = hsb2)

Residuals:

Min 1Q Median 3Q Max

-18.6265 -4.3108 -0.0645 5.0429 16.4974

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 54.77557 0.69162 79.199 < 2e-16 ***

gendermale -4.27120 1.02545 -4.165 4.66e-05 ***

socst_cent 0.42007 0.06780 6.195 3.36e-09 ***

gendermale:socst_cent 0.20473 0.09537 2.147 0.0331 *

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 7.212 on 196 degrees of freedom

Multiple R-squared: 0.4299, Adjusted R-squared: 0.4211

F-statistic: 49.26 on 3 and 196 DF, p-value: < 2.2e-16we add the \(.2 \times \mathrm{gender} \times \mathrm{socst}\) term to the equation from two slides ago.

-

\(54.78\) is still the expected mean

writescore of females, whensocstis at its mean value. -

\(-4.28\) is the expected mean difference in

writescore for males compared to females, whensocstis controlled. -

\(.42\) is the expected mean increase in

writefor each unit increase insocstfor females. -

\(.2\) is the expected difference in slope between

writeandsocstfor the males.

Interpreting the Interaction Term

When we have a categorical moderator, interpreting the interaction terms boils down at looking how the slopes of the groups change.

Equation for Males

\[\widehat{\mathrm{write}} = 54.78 - 4.28 \times \mathrm{gender} + .42 \times \mathrm{socst} + .2 \times \mathrm{gender} \times \mathrm{socst}\]

↓

\[\widehat{\mathrm{write}} = 54.78 - 4.28 \times \mathrm{gender} + .42 \times \mathrm{socst} + .2 \times 1 \times \mathrm{socst}\]

↓

\[\widehat{\mathrm{write}} = 54.78 - 4.28 \times \mathrm{gender} + .42 \times \mathrm{socst} + .2 \times \mathrm{socst}\]

↓

\[\widehat{\mathrm{write}} = 54.78 - 4.28 \times \mathrm{gender} + .62 \times \mathrm{socst}\]

Equation for Females

\[\widehat{\mathrm{write}} = 54.78 - 4.28 \times \mathrm{gender} + .42 \times \mathrm{socst} + .2 \times \mathrm{gender} \times \mathrm{socst}\]

↓

\[\widehat{\mathrm{write}} = 54.78 - 4.28 \times \mathrm{gender} + .42 \times \mathrm{socst} + .2 \times 0 \times \mathrm{socst}\]

↓

\[\widehat{\mathrm{write}} = 54.78 - 4.28 \times \mathrm{gender} + .42 \times \mathrm{socst}\]

So, we expect the relation between write and socst (i.e., the slope of socst) to be \(.2\) larger for males.

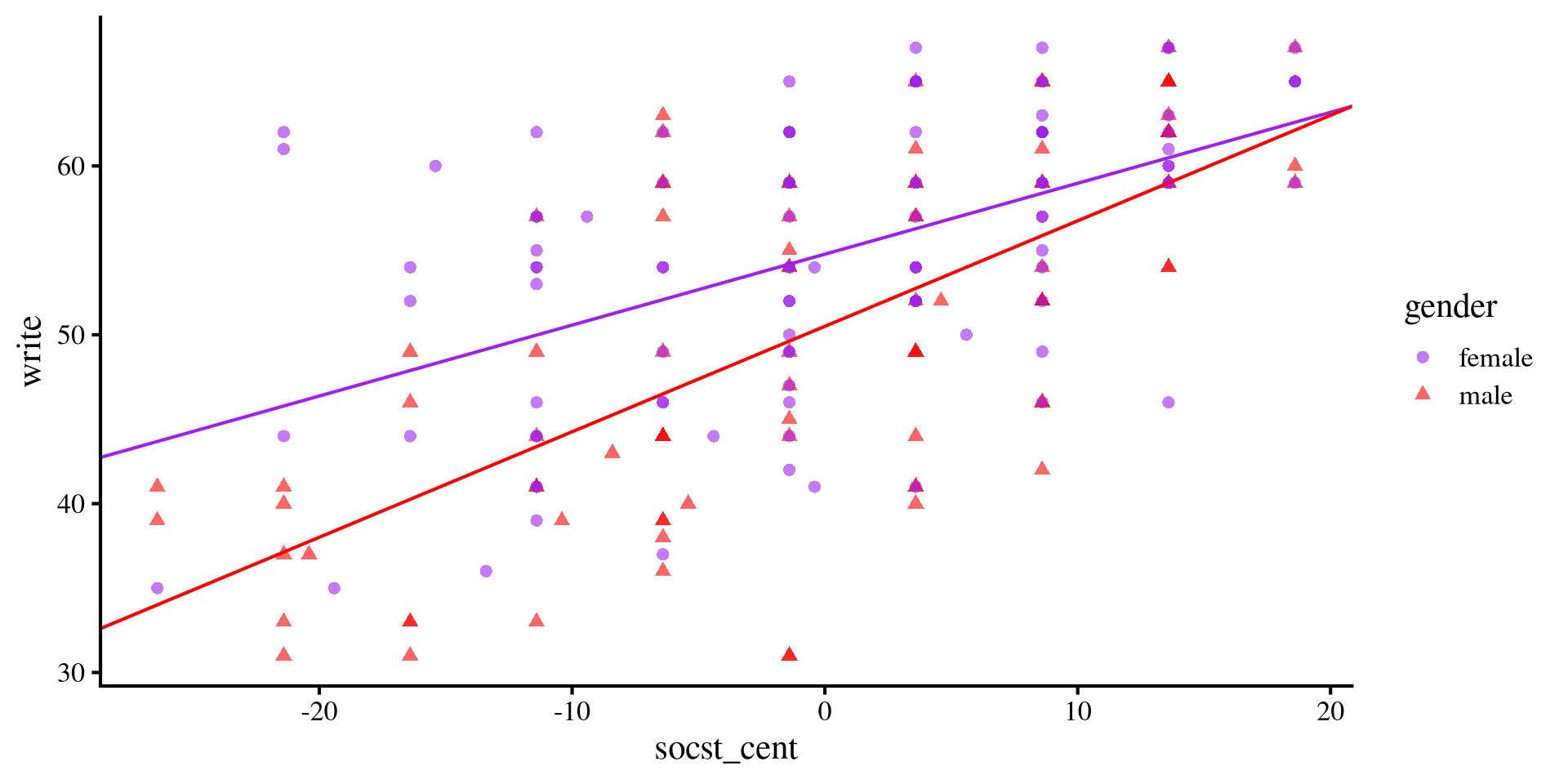

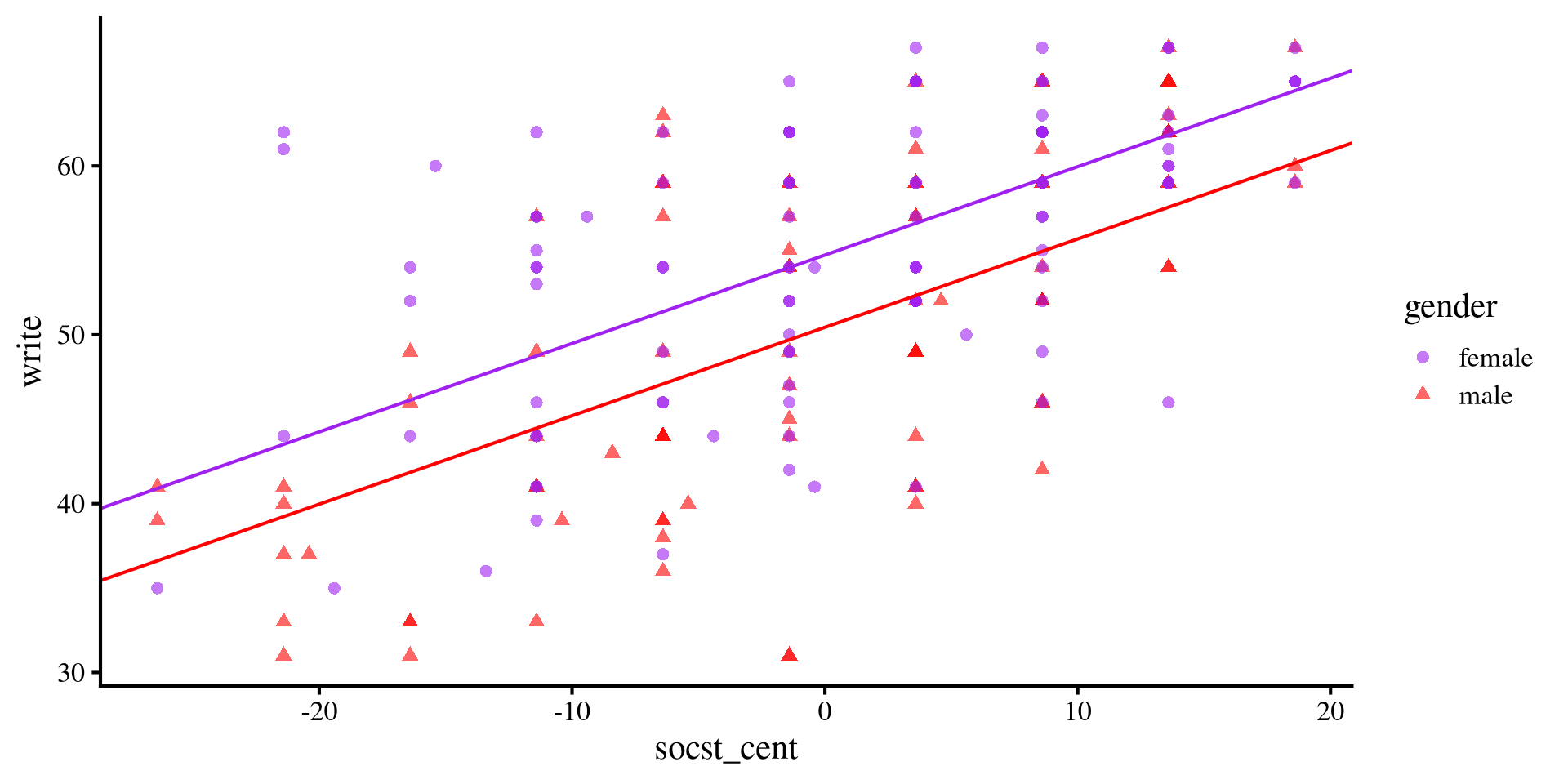

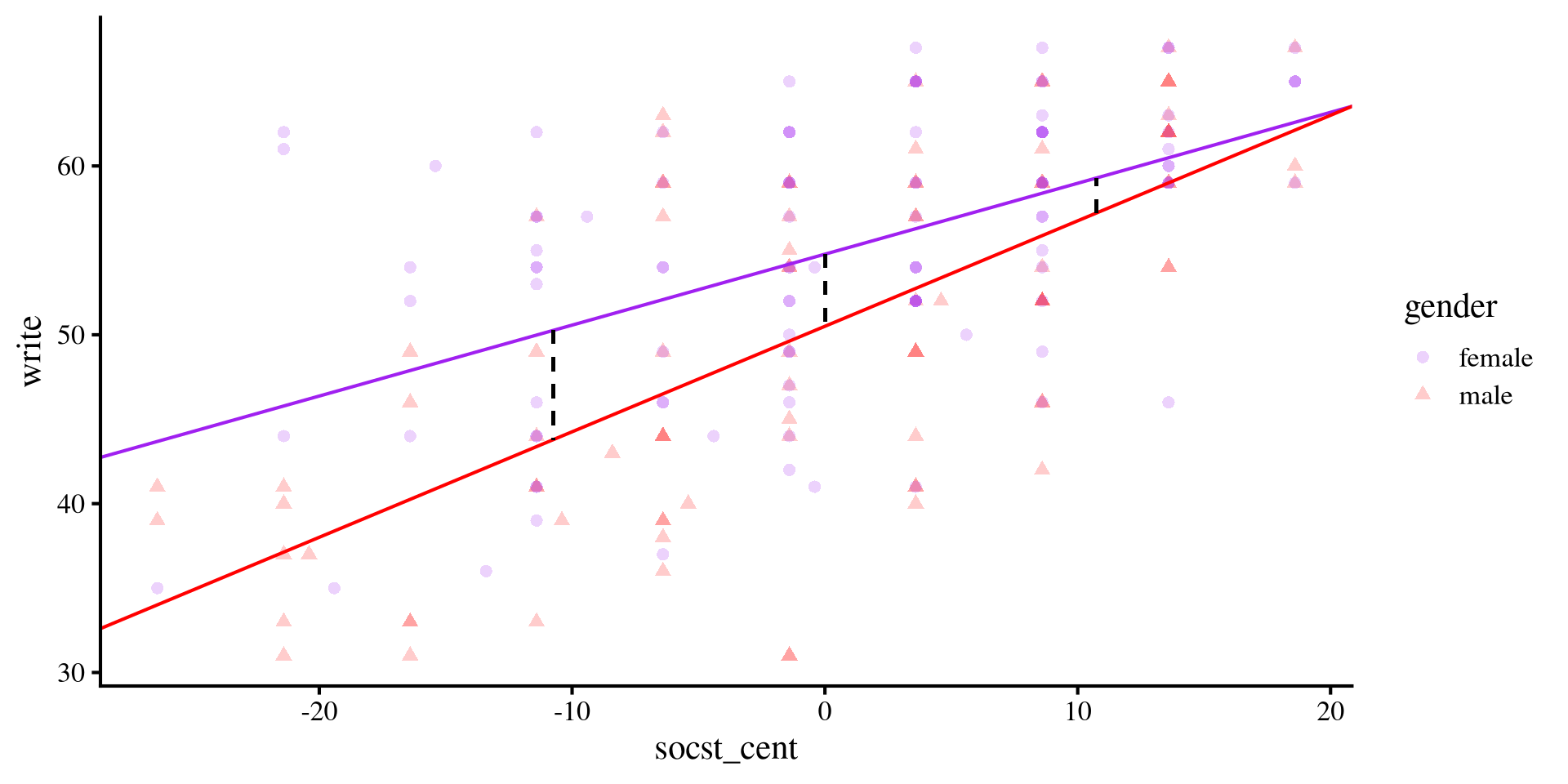

Visualizing write ~ gender * socst

males is steeper. Although at lower levels of socst, females do considerably better, the gap closes more and more as socst increases.

Plot code

ggplot(hsb2, aes(x = socst_cent, y = write, colour = gender)) +

geom_point(aes(shape = gender), alpha= .6) +

geom_abline(intercept = coef(reg_int)[1], slope = coef(reg_int)[3], col = "purple") +

geom_abline(intercept = coef(reg_int)[1] + coef(reg_int)[2], slope = coef(reg_int)[3] + coef(reg_int)[4], col = "red") +

scale_color_manual(values=c( "purple", "red"))

Plot code

ggplot(hsb2, aes(x = socst_cent, y = write, colour = gender)) +

geom_point(aes(shape = gender), alpha= .6) +

geom_abline(intercept = coef(reg_both)[1], slope = coef(reg_both)[3], col = "purple") +

geom_abline(intercept = coef(reg_both)[1] + coef(reg_both)[2], slope = coef(reg_both)[3], col = "red") +

scale_color_manual(values=c( "purple", "red"))

Plot code

mean_female <- mean(hsb2$write[hsb2$gender == "female"])

mean_male <- mean(hsb2$write[hsb2$gender == "male"])

ggplot(hsb2, aes(x = gender, y = write)) +

geom_point() +

geom_hline(aes(yintercept = mean(mean_female), color = "Female"),

linetype = "dashed") +

geom_hline(aes(yintercept = mean(mean_male), color = "Male"),

linetype = "dashed") +

scale_color_manual(values = c("Female" = "blue", "Male" = "red")) +

labs(color = "Means")

Simple Slopes With emmeans

Whenever categorical variables are involved, the emmeans package likely has some functions to summarize results. For interaction between continuous and categorical variables, the emtrends() function is very helpful!

emtrends() function also provides confidence intervals for the slopes.

gender socst_cent.trend SE df lower.CL upper.CL

female 0.420 0.0678 196 0.286 0.554

male 0.625 0.0671 196 0.493 0.757

Confidence level used: 0.95 -

reg_intis the regression model. -

~followed bygenderimplies that we want the slopes for each level of thegendervariable. -

var =followed by"socst_cent"is the continuous predictor we want to get slopes for.

emmeans package with interactions.

Predicted Means

socst_cent, but we can also shift our focus to means and mean differences. In our case, the emmeans package can be used to calculate predicted means of groups at different values of the continuous variable.

socst_cent at which we want to calculate means. We do this by creating a list object containing the name of the continuous variable and the values at which we want to calculate means.

reg_int), and use ~socst_cent*gender to tell the function that we want the means of each level of gender by the specified values of socst_cent:

socst_cent gender emmean SE df lower.CL upper.CL

-10.7 female 50.3 1.030 196 48.2 52.3

0.0 female 54.8 0.692 196 53.4 56.1

10.7 female 59.3 0.979 196 57.4 61.2

-10.7 male 43.8 1.020 196 41.8 45.8

0.0 male 50.5 0.757 196 49.0 52.0

10.7 male 57.2 1.070 196 55.1 59.3

Confidence level used: 0.95 write score for males and females at -/+ 1 SD and mean of socst score.

Predicted Means Graphically

The previous slide may seem intimidating, but the means of write score that we just calculated (brown dots in the plot) are simply the values of \(y\)-axis given some values of \(x\) according to the regression line of each groups.

socst_cent gender emmean SE df lower.CL upper.CL

-10.7 female 50.3 1.030 196 48.2 52.3

0.0 female 54.8 0.692 196 53.4 56.1

10.7 female 59.3 0.979 196 57.4 61.2

-10.7 male 43.8 1.020 196 41.8 45.8

0.0 male 50.5 0.757 196 49.0 52.0

10.7 male 57.2 1.070 196 55.1 59.3

Confidence level used: 0.95 Plot code

ggplot(hsb2, aes(x = socst_cent, y = write, colour = gender)) +

geom_point(aes(shape = gender), alpha= .6) +

geom_abline(intercept = coef(reg_int)[1], slope = coef(reg_int)[3], col = "purple") +

geom_abline(intercept = coef(reg_int)[1] + coef(reg_int)[2], slope = coef(reg_int)[3] + coef(reg_int)[4], col = "red") +

scale_color_manual(values=c( "purple", "red")) +

geom_point(aes(x = -10.7, y = 50.3), color = "brown", size = 4) +

geom_point(aes(x = -10.7, y = 43.8), color = "brown", size = 4) +

geom_point(aes(x = 0, y = 54.8), color = "brown", size = 4) +

geom_point(aes(x = 0, y = 50.5), color = "brown", size = 4) +

geom_point(aes(x = 10.7, y = 59.3), color = "brown", size = 4) +

geom_point(aes(x = 10.7, y = 57.2), color = "brown", size = 4)

Testing Mean Differences

write score for both groups at different levels of socst score, the logical extention is to test whether these means are significantly different from each other.

We use the contrast() function from the emmeans package (careful! not the contrasts() function 🙈):

socst_cent = -10.7:

contrast estimate SE df t.ratio p.value

female - male 6.47 1.45 196 4.473 <.0001

socst_cent = 0.0:

contrast estimate SE df t.ratio p.value

female - male 4.27 1.03 196 4.165 <.0001

socst_cent = 10.7:

contrast estimate SE df t.ratio p.value

female - male 2.07 1.45 196 1.428 0.1550write is significant at lower values of socst, but is no longer significant at high values of socst.

Mean differences Graphically

socst simply mean testing whether the dashed black lines are significantly different from 0 in length. The dashed lines, representing the distance between the two regression lines becomes smaller and smaller as socst increases.

It’s a matter of perspective

You may have noticed that first we looked at (1) whether the slope of socst changed depending on gender, and then we (2) looked at whether there were mean differences in mean write score for gender depending on different values of socst. In other words, we swapped the variable that we were treating as the moderator. This highlights how from a statistical perspective there is no difference between a moderator and a focal predictor. You decide how two present the results and frame the two variables.

Plot code

# save summary for mean differences

sum <- summary(means)

ggplot(hsb2, aes(x = socst_cent, y = write, colour = gender)) +

geom_point(aes(shape = gender), alpha= .2) +

geom_abline(intercept = coef(reg_int)[1], slope = coef(reg_int)[3], col = "purple") +

geom_abline(intercept = coef(reg_int)[1] + coef(reg_int)[2], slope = coef(reg_int)[3] + coef(reg_int)[4], col = "red") +

scale_color_manual(values=c( "purple", "red")) +

geom_segment(y = sum[1,3], yend = sum[4,3], x = sum[4,1], xend = sum[4,1], col = "black", lty = 2) +

geom_segment(y = sum[2,3], yend = sum[5,3], x = sum[5,1], xend = sum[5,1], col = "black", lty = 2) +

geom_segment(y = sum[6,3], yend = sum[3,3], x = sum[3,1], xend = sum[3,1], col = "black", lty = 2)

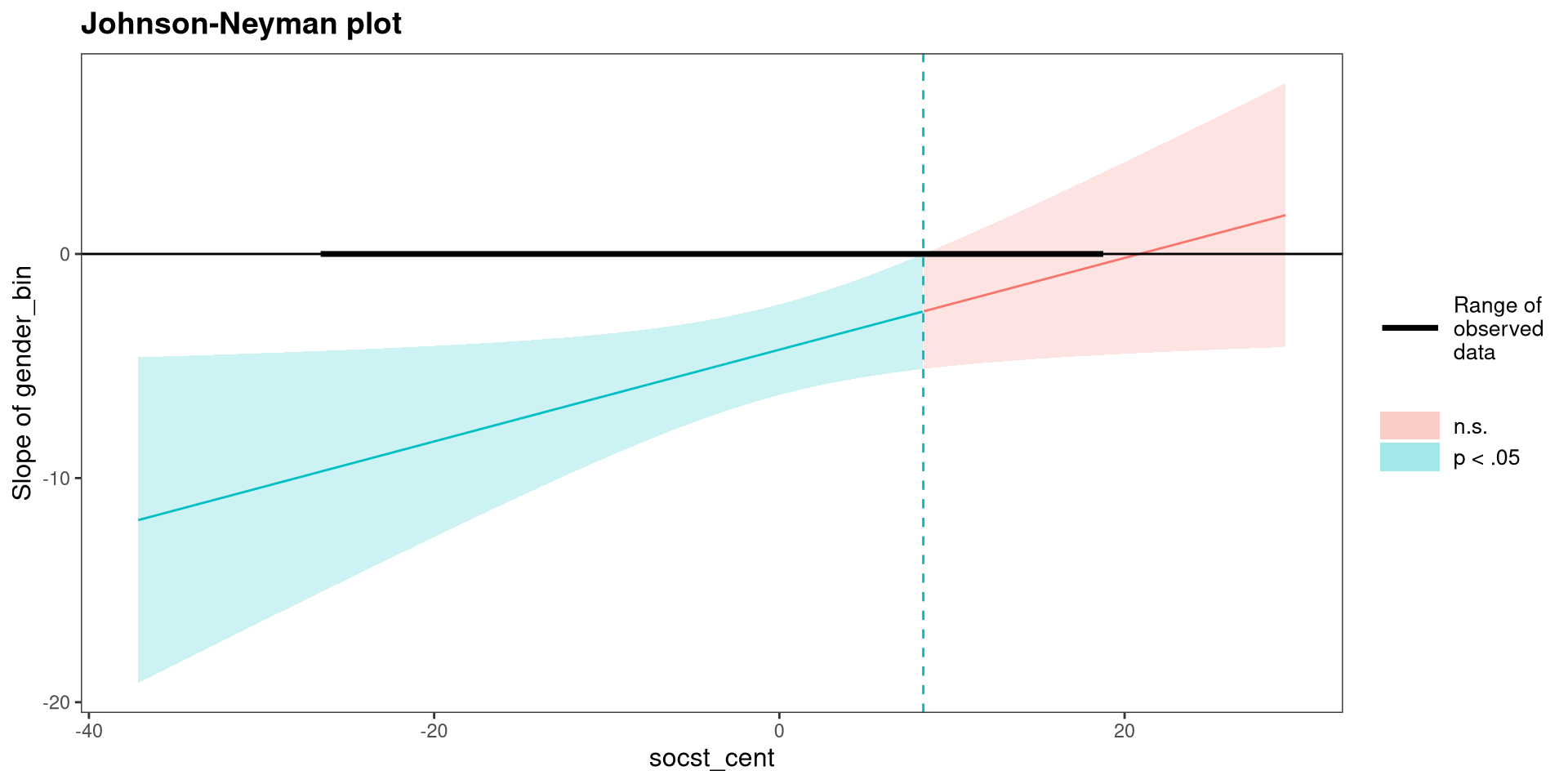

johnson-neyman Plot?

# the predictor must be a vector of 0s and 1s

hsb2$gender_bin <- ifelse( hsb2$gender == "female", 0, 1)

# rerun the regression with binary gender

reg_int_2 <- lm(write ~ gender_bin * socst_cent, data = hsb2)

# save summary for mean differences

interactions::johnson_neyman(reg_int_2,

pred = "gender_bin",

modx = "socst_cent")

write score for each value of socst score. Consistent with the plot on the previous slide, the difference is no longer significant when socst is around 10 or more.

Plotting with emmip()

We can use the emmip() to plot the slopes for both groups.

emmip() the range of the continuous variable for which want to plot slopes. here I choose the minimum and maximum of socst_cent.

ggplot 🫣, although I understand that may not be everyone’s cup of tea

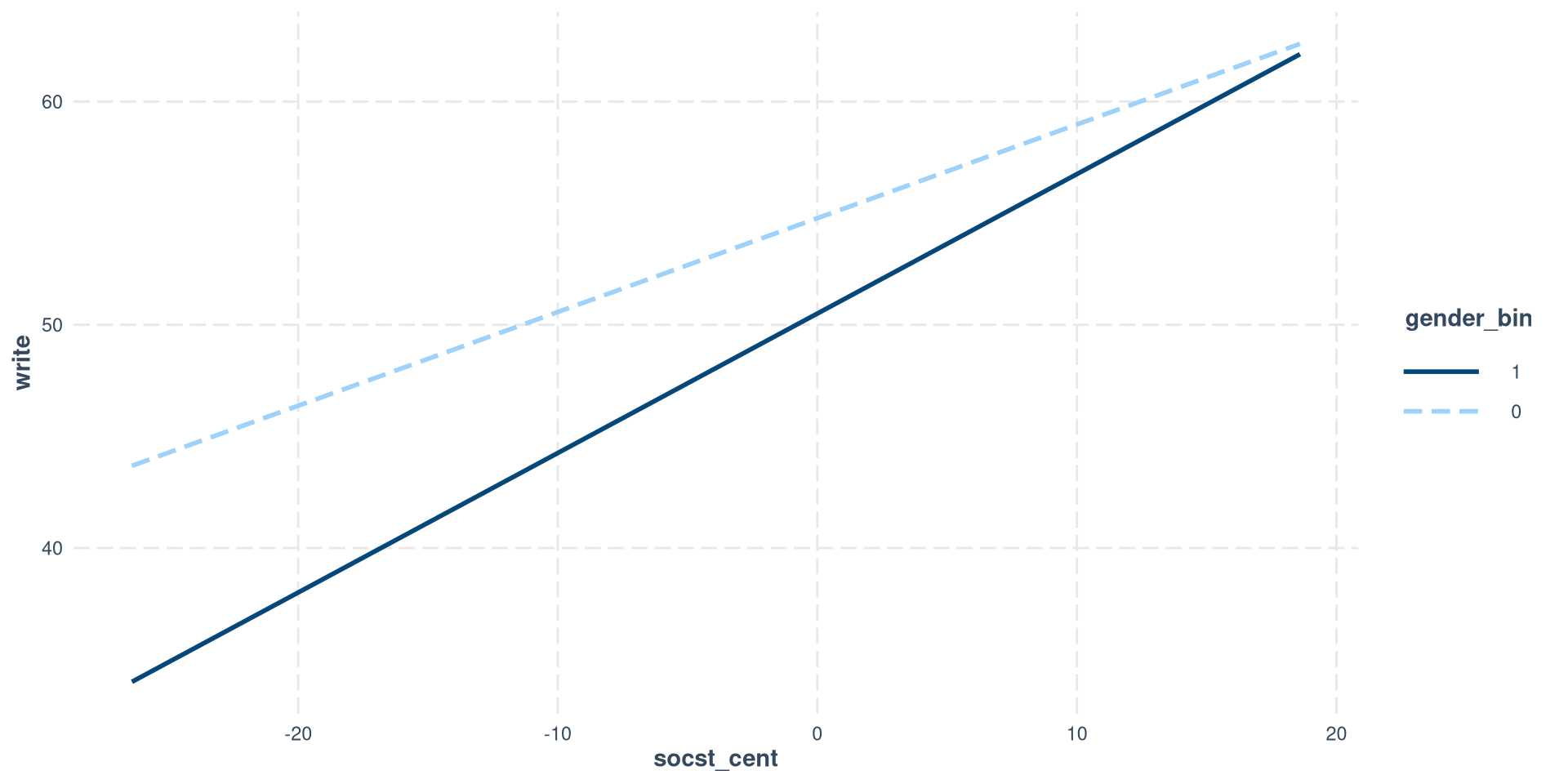

Plotting with interact_plot()

In our case, it is slightly simpler to use the interact_plot() function, but, unlike emmip(), it requires a binary variable and only works with 2 categories.

Notice that I am using the regression model with the binary version of gender because the interactions package does not play nice factor variables.

So, you have multiple options for plotting simple slopes. Still, my go to is just using ggplot because it gives me the most freedom (as you can tell from earlier slides).

References

PSYC 7804 - Lab 11: Interactions With Categorical Predictors